Defensive manoeuvres: the vaccines the military made

Generals have understood for centuries that vaccines make for a good defence, and many modern vaccines can trace their origins to military-led research programmes. VaccinesWork spoke to Dr Kendall Hoyt about what we can learn from World War II-era light-speed R&D.

- 2 April 2026

- 14 min read

- by Maya Prabhu

At a glance

- In most conflicts across most of history, diseases killed more soldiers than weapons did. Vaccination has been recognised as an invaluable defensive strategy by military commanders for centuries.

- Military research programmes have played critical roles in the development of many new or improved vaccines, with the American military investment in vaccine R&D during and after World War II standing out for its unparalleled string of achievements.

- How did they do it? Researcher Dr Kendall Hoyt talked us through the ingredients for successful mission-driven R&D.

War is a losing game. At least, it’s a game of losses: inflict as many as you can, sustain as few. A good defence is whatever it takes not to get blown up, shot down, or sick.

That last one is important. Disease has on many occasions undone or upended an advantage in raw firepower.

Malaria brought down Alexander the Great, stalling his juggernaut campaign of world conquest definitively somewhere in modern-day Punjab. Yellow fever helped a strategically proficient[1] but initially poorly-armed rebel slave force beat the professional soldiers of the French, British and Spanish imperial armies out of Haiti. In Russia, Napoleon’s Grande Armée, the largest army Europe had ever seen, was all but rubbed out by cold weather and a tripartite bacterial offensive.

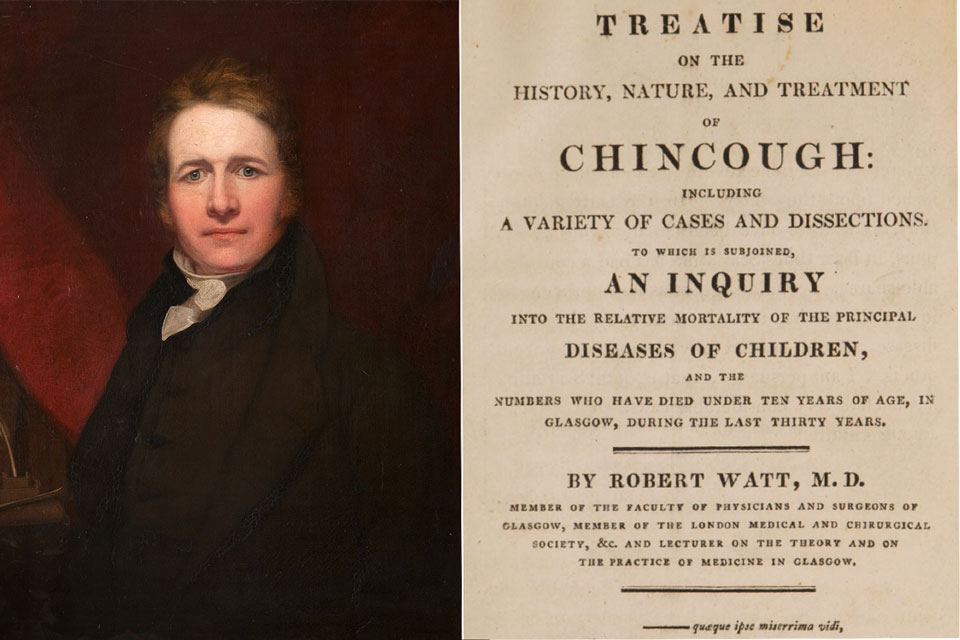

In fact, in every major conflict on record until at least 1870[2], pathogens killed more combatants than weapons did, and often by extraordinary margins[3]. No wonder: barracks and frontlines produce ideal conditions for epidemics. Large groups of men with stressed immune systems cluster in cramped and typically relatively unhygienic environments, often far away from the pathogenic ecosystem that informed the development of their bodies’ natural defences.

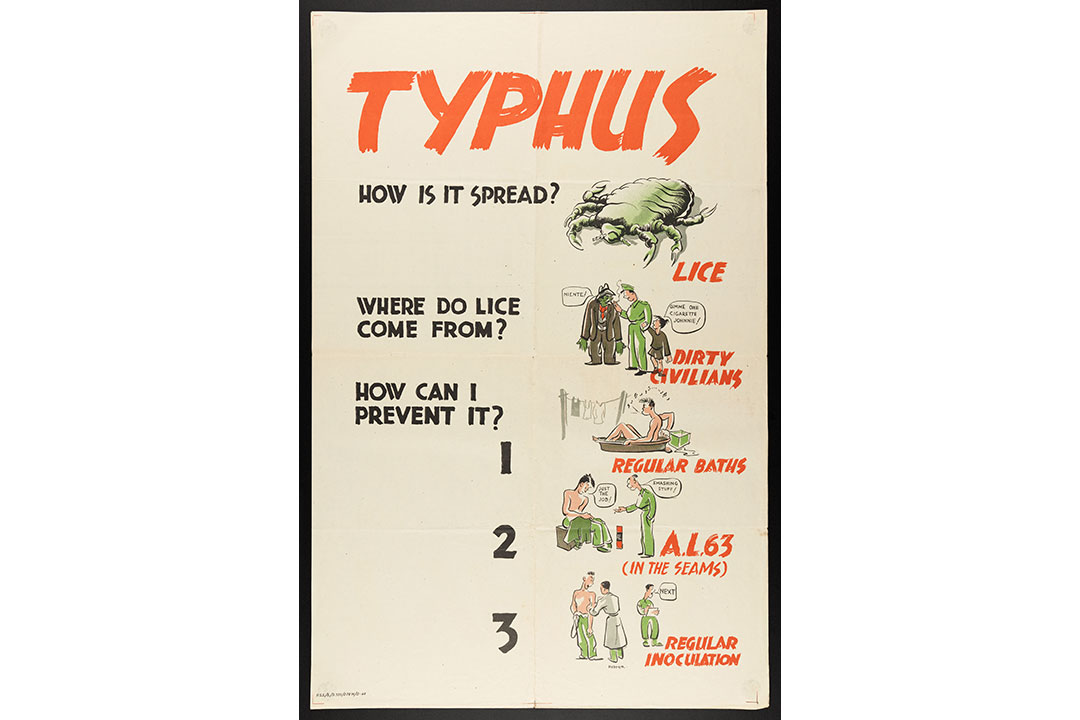

Source: Wellcome Collection.

But over time, the mortality ratio began to tilt more frequently in favour of injury[4]. Warfare was modernising: weaponry was getting more destructive, and scientists and medics were getting better at saving people from illness.

Strategic immunity

Military commanders cottoned on to the utility of immunisation pretty quickly.

Here was a technology with the potential to cancel out utterly the threat of particular epidemic diseases, curtail casualties and loosen the tactical hobble of soldier sick-days.

In fact, in 1777, almost two decades before Edward Jenner discovered the smallpox vaccine, General George Washington weighed the risks and ordered the variolation – a far more dangerous process than vaccination, because it deployed the unadulterated smallpox virus as an immunising agent – of every soldier in the Continental Army. “Should the disorder infect the Army in the natural way and rage with its usual virulence we should have more to dread from it than from the Sword of the Enemy,” he wrote.

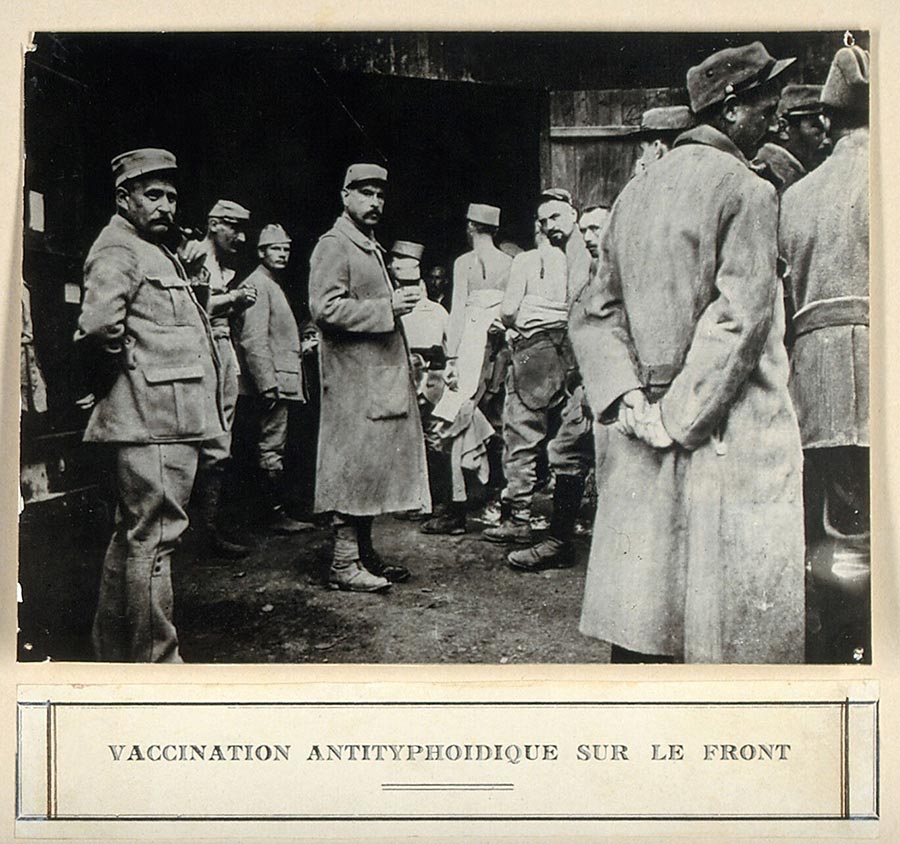

Today, most armies mandate vaccination against certain pathogens for their troops. Several countries’ defence programmes have made, and continue to make, important investments in vaccine development to boost readiness against both naturally-occurring threats and bioweapons. Several vaccines in the former category have eventually emerged into the civilian world, to general benefit.

The golden age of military vaccine R&D

For innovation in immunisation, the United States military’s record stands out. The Department of Defense continues to invest in vaccine development today, but the extraordinary green wave of vaccine R&D achieved by World War II-era programmes will be tough to repeat.

Dr Kendall Hoyt, an associate professor at the Geisel School of Medicine at Dartmouth and the author of Long Shot: Vaccines for National Defense, has extensively studied that golden age. She writes that military-led research resulted in the development of “new or significantly improved vaccines for 10 of the 28 vaccine-preventable diseases identified in the 20th century.”

Fresh war in Europe marshalled attention to the lessons of the Influenza Pandemic of 1918, which had emerged at the tail-end of the last great conflict to ultimately kill 50–100 million people worldwide. American soldiers weren’t spared: some 20–40% of enlisted men fell ill and tens of thousands of soldiers and sailors died, with those fatalities accounting, by one estimate, for more than 80% of the total war casualties sustained by the American army.

Fears of a similar disaster prompted vigorous spending. Between just 1940 and 1942, funding for US Army medical research rose by more than 100%.

And almost immediately, defence-led vaccine research programmes were justifying the investment, notching up unprecedented wins. The very first flu vaccine took just 19 months to go from programme inception to approval for military use. The typical window for vaccine development was four times that on the speedy side.


The first vaccines against pneumococcal pneumonia, influenza, Japanese encephalitis and botulism would emerge out of similar programmes in the following years and decades. Military programmes wheeled out improved yellow fever, cholera, plague, smallpox and tetanus vaccines; an all-new typhus vaccine replaced a now-defunct one.

But by the 1980s, that unparalleled streak of innovation tailed off. What had changed?

The defensive bloc

A better question: how had they done it in the first place?

The extraordinary achievements of post-WWII defence-led vaccine development had less to do with scientific inspiration than the creation of a uniquely efficient, mission-driven innovation system, Hoyt told VaccinesWork in a recent interview.

Investment in basic science was a precondition. In the first half of the 20th century, many vital discoveries had been made – various principles for killing or weakening pathogens had been trialled, more and more pathogens had been successfully isolated and grown in labs around the world – but those advances in many cases still needed to be turned into workable tools.

The military tackled that principally by creating a series of highly-focused commissions, with names like the Commission on Air-borne Infections and the Commission on Measles and Mumps.


Handed a clear R&D objective, the commission’s director was given the necessary material resources and a great deal of top-down decision-making freedom in order to get there – but only there. In 1945, James Conant, outgoing director of the National Defense Research Committee, summed up the rationale: “There is only one proven method of getting results in applied science – picking men of genius, backing them heavily, and keeping their aim on the target chosen.”

The “men of genius” in question were not typically enlisted men. The military did have in-house scientists operating in critical roles in and across vaccine development programmes, but the top infectious disease specialists in the country were contracted to lead research.

In most cases they did so from inside their home institutions – often leading universities – on a part-time basis. That made an alliance between academia and the military structural to the vaccine-development programmes.

A third necessary party to that alliance was industry, which dedicated considerable resources to the production of vaccines for the armed forces without hope of profit. War was looming: duty called. Contracts with both researchers and companies were issued on a “no loss, no gain” basis. That sense of patriotic obligation outlived WWII: “pharmaceutical companies looked at vaccine divisions as a public service, not as huge revenue generators,” virologist Don Metzgar told Hoyt, describing Cold War-era agreements.

Free, focused, fast

The urgency and, crucially, temporariness of the wartime moment greased all sorts of wheels that might otherwise have squeaked. That, and other historically-contingent factors, helped build a “uniquely generative culture”, in Hoyt’s terms.

Intellectual property concerns barely factored. Until the Bayh-Dole Act of 1980, the government retained ownership of any IP developed with federal funding; patenting, licensing and associated litigation weren’t an issue. It was common, Hoyt notes, for scientists working in the military programmes and their friends in industry to share samples and research results.

The men pushing the achievements of the era were, or became, a set. They emerge in their letters to each other: colleagues and pals, clever, impatient, grandiose and, importantly, unencumbered by many of the considerations that make science safer today.

When Vannevar Bush, longtime director of the Office of Scientific Research and Development (OSRD), was asked to join Merck’s Board of Directors after World War II, he scribbled back paragraphs of wisecracking invective against medical ethics: “I don’t suppose we would get into a lot of medical ethics; I’m sure I couldn’t take that. I’ve had measles and flu and a lot of things that ordinary people have, but I’ve never had ethics,” he wrote. “If you think there’s any danger of infection we ought to quit right here.”

Picking up the baton: pneumococcus vaccines

Scientists had known a pneumococcal vaccine made from purified pneumococcal capsular polysaccharides could produce immunity in mice since 1927. In the 1930s, researchers at the Rockefeller Institute had even shown that this novel kind of vaccine could immunise humans. Then, in 1939, the antibiotic sulfapyridine became available, making pneumococcal diseases a curable concern. Vaccine R&D flagged.

But for an American military facing a new war in Europe, vaccination made more sense than treatment. Treatment takes time; sick days theoretically mean gaps on the frontline. In 1942, the military-led Commission on Pneumococcal Diseases took up the research baton.

By 1944, enough lots of the vaccine had been produced out of the labs of pharma company E.R. Squibb and Sons for a test-run. Some 17,000 men at the Army Air Force Technical School in Sioux Falls, South Dakota, were enrolled in a randomised, placebo-controlled trial. In 1945, at the trial’s end, the jab was declared a success, with the study showing “that epidemics of pneumococcal pneumonia in closed populations could be terminated within two weeks”, in the words of Michael Heidelberger, a scientist in the commission.

End of the era

“There was a culture that was highly generative for a period of time. But then things changed,” Hoyt told me. “It had to do with demilitarisation post-Vietnam, and moving a lot of the funding and the Center of Excellence out of the military and into the NIH, which was no longer team-based and now investigator-led research, which is a completely different culture.”

A cohort of standout scientists who had achieved big things in military research programmes were offered better pay in industry, and took it. “And at the same time, you know, we had Bayh-Dole, right? So now there’s going to be IP coming out of academia, and that freewheeling sense of, ‘we’re going to share our information and work as teams to develop these vaccines’ changed.”

Industry became more financialised. The risk of litigation meant vaccine divisions could no longer afford sliver-thin profit margins. “It was a fragile business, and so you start to get consolidation. People divested from vaccines, and you started to get product-line monopolies,” Hoyt said.

Canada’s Ebola shield goes civilian

Though larger outbreaks are becoming more common, Ebola is still comparatively rare, making the commercial risk of investment for market-led vaccine manufacturers a steep one. But it’s also terrifying, winning it a spot on many countries’ bioweapon watch-lists – and national security is a great alternative to the profit motive.

By the time the West African epidemic of 2014 struck, a number of candidate vaccines were percolating through various defence-linked development programmes. One of the most advanced at that time was rVSV-ZEBOV, developed by Canada’s National Microbiology Laboratory. As the outbreak gathered pace, Canada’s federal government donated its candidate vaccine for use in Africa, licensing its manufacture to NewLink Genetics and Merck Sharp & Dohme (MSD).

By 2015, doses of vaccine were available to trial in Guinea, and 12,000 contacts of Ebola patients were enrolled. The vaccine’s measured efficacy in a ring vaccination strategy was 100%.

Even so, the commercial incentive to ferry the vaccine through the many expensive stages of licensure remained slight. So Gavi stepped in, offering a promise to purchase doses of licensed vaccines once those were ripe for market, provided manufacturers agreed to build a stockpile of their investigational jab for emergency use prior to licensure.

MSD stepped up. In 2018, the game-changing stockpile of vaccine proved itself in the DRC. The vaccine was prequalified by the World Health Organization in 2019. It now preventively protects health workers in high-risk places.

Can you bottle R&D lightning?

History refuses to be wound back on its spool. The economic, political and legal environment we live in is altered for good.

But Hoyt says that “the original engine” that powered the post-WWII vaccine-development flurry can, under very specific circumstances, be mimicked with modern parts.

To reverse-engineer a blueprint for this kind of accelerated innovation, Hoyt and colleagues dissected not only the vaccine development programmes of the 1940s–1960s, but the Manhattan Project, the Apollo project and Operation Warp Speed, which produced a COVID-19 jab on a time-scale that would have impressed even Vannevar Bush.

“It’s called mission-driven R&D,” Hoyt said. To qualify as a “mission”, the project needs to be a well-defined technical problem that’s also time-limited.

Mission-driven R&D doesn’t depend on military involvement, but it does require a martial kind of concentrated command: “You need strong leadership, a sort of uncommon coordination. Like what we had with Moncef Slaoui and General Perna. They’re told they can operate, that they have authority to operate across budget lines and across departments and across sectors. They are told to make mistakes – they are allowed to take risks.”

Next, it needs tremendous amounts of money: “You don’t want to nickel-and-dime that endeavour, so it has to be a national priority – somewhat existential.”

Deep pockets allow for many simultaneous efforts. “You need to take multiple shots on goal – you have to manage risk in your portfolio,” Hoyt explains. Operation Warp Speed backed several vaccine candidates at once, and those candidates included different vaccine platforms.

And finally, to arrive at a state of flow, mission management requires both “push” incentives – an up-front grant, say – and “pull” incentives, the promise of a reward for achievement.

So, it’s not impossible. But it’s also not cheap, not easy, not applicable to every critical problem and, once achieved, necessarily perishable. Where does that leave us?

Invasion-ready: the US Army’s Japanese encephalitis vaccine

It was the mid-1940s, and what had begun as a European war was all over the Pacific.

A new theatre of war meant new pathogenic threats. Scientists in Japan had already isolated the deadly, epidemic Japanese encephalitis virus (JEV) for study in the lab by the mid-1930s, and would license an impactful vaccine for human use in 1954. But the American military was preparing for an East Asian land invasion, and they needed their own shield, promptly.

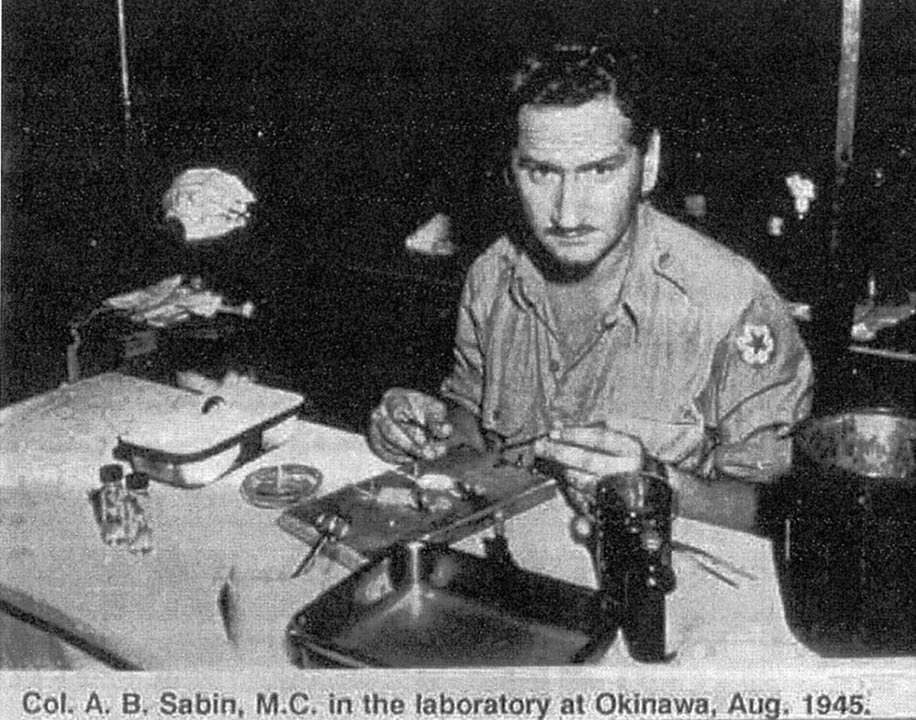

Major Albert Sabin – most famous today for his oral polio vaccine – had been tapped to lead the American JE vaccine sprint. Within just 15 months, he and his team produced a vaccine: a suspension of virus-infected mouse brain in which the virus had been killed with formalin. By the end of WWII, 250,000 military personnel had received it.

That first vaccine was replaced by better iterations in subsequent decades, which continued to protect American soldiers in Asia during the Cold War. Like polio vaccines, JE vaccines were also wielded as a tool in the soft-power “battle” for hearts and minds, rolling out to civilian populations in contested territories.

Building up the rainy-day fund

“Basic science is money in the bank,” Hoyt says. “That’s your rainy-day fund.” The next mission’s moment will come, and it will instrumentalise the discoveries we’ve made in the meantime.

“This is what’s behind the whole prototype vaccine initiative that originally came out of Barney Graham at the NIH, that CEPI is now spearheading,” Hoyt says.

We will see another pandemic. To make sure the scientists and governments who lead the missions against it have the tools they will need to move as fast as we’ll all want them to, CEPI is building vaccines against pathogens that exemplify some or all of the worst traits of viral families with pandemic potential, while also developing the clinical trial and manufacturing networks that will support them. “What they’re doing is preparing the groundwork,” says Hoyt.

Ask any general: a good defence means not getting caught with your pants down.

The war vaccine that wasn’t

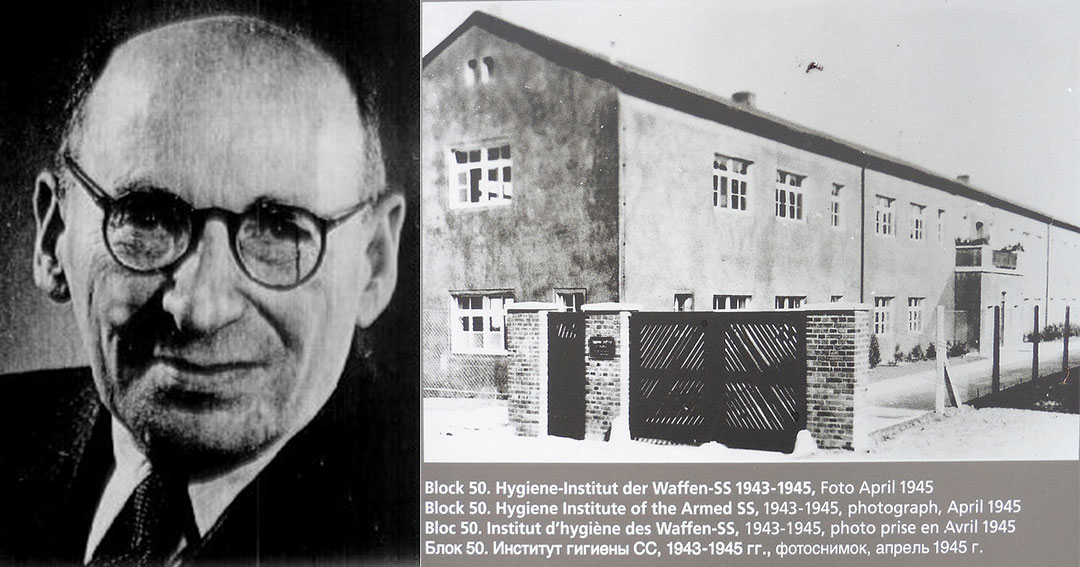

When Ludwik Fleck, a Jewish microbiologist, was sent to Auschwitz in 1943, the Nazis thought they could compel him to manufacture doses of the novel typhus vaccine he had developed, to protect German soldiers on the frontline. They got a whole lot less than they bargained for.

Revisit the extraordinary story of a rag-tag team of concentration camp inmates and their brilliant vaccine con.